Google's AI Just Gave You a Filter Bubble While Defining One

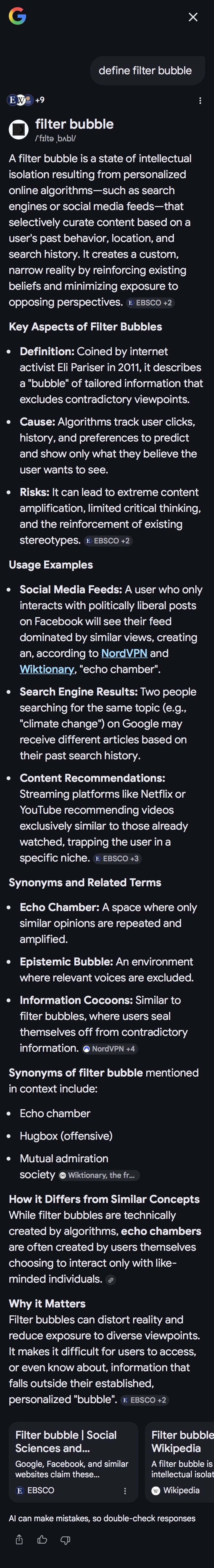

Search "filter bubble" right now and Google's AI will tell you it is "a state of intellectual isolation" that "creates a custom, narrow reality by reinforcing existing beliefs and minimising exposure to opposing perspectives." Its sources are EBSCO, Wikipedia, and NordVPN. A VPN company. It does not cite the Reuters Institute literature review that found no support for the hypothesis. It does not mention the Oxford, Stanford, and Microsoft study of 50,000 users that found the effect was modest and mostly driven by user self-selection rather than algorithms. It does not acknowledge that the research picture is contested. One answer, from an invisible source selection that includes a VPN company's marketing content, presented as the settled definition of a concept in information science.

Which is, depending on your definition, a filter bubble.

This is not a gotcha specific to this query. It is how AI-generated answers work across most topics. The model selects sources, synthesises them into a single coherent response, and presents the result without surfacing the contested terrain underneath. Sometimes the sources are excellent. Sometimes they are NordVPN. You generally cannot tell which, because the answer looks the same either way. For straightforward factual queries this is fine. For topics with genuine empirical disagreement, which includes most interesting questions, it is a problem. The answer you receive reflects the sources the model happened to weight, not the actual state of knowledge on the subject. That distinction matters, and AI results do not make it.

This is not an argument against using AI tools. It is an argument for treating AI-generated answers the way you would treat any single source: as a starting point, not a conclusion. Independent research (going to the actual studies, reading the actual papers, finding out who disagrees and why) has not become less important because AI can now produce a fluent paragraph on any topic. It has become more important, because the fluency makes the gaps harder to see.

In 2011, Eli Pariser named the phenomenon: personalisation algorithms were quietly sealing people inside ideological bubbles, filtering out dissenting views, sorting the internet into separate factual universes. The term went everywhere. Politicians cited it. Regulators invoked it. Every piece about online polarisation mentioned it.

There was one problem. Researchers spent the next decade looking for the filter bubble and mostly could not find it.

The evidence did not support the strong version of Pariser's thesis. People were not ideologically sealed. They encountered content that challenged them. They used multiple sources. The algorithms were nudging, not enclosing. The filter bubble, as a description of how most people actually experienced the internet, was significantly overstated.

And yet the thing Pariser was actually worried about, invisible algorithmic selection determining what version of reality you see, without your knowledge or consent, is now genuinely happening. Just not through Facebook's news feed. Through your AI assistant. The mechanism is different. The effect is worse. And almost nobody is talking about it in those terms.

What the Research Actually Found

The filter bubble thesis generated a substantial body of empirical research. Most of it pushed back.

A 2016 study by Seth Flaxman (Oxford), Sharad Goel (Stanford), and Justin Rao (Microsoft Research) examined the browsing histories of 50,000 US news consumers. They found that social media does contribute to ideological segregation, but the effect was modest, and the majority of it came from user self-selection rather than algorithmic filtering. People were choosing ideologically similar content themselves. The algorithm was not the primary driver.

A 2022 literature review from the Reuters Institute for the Study of Journalism at Oxford, surveying the accumulated research, concluded that echo chambers are "much less widespread than is commonly assumed" and found "no support for the filter bubble hypothesis." Grant Blank at the Oxford Internet Institute, whose work surveyed adults in the UK and Canada, found that people consumed on average five different media sources and regularly encountered views they disagreed with. Three separate 2018 studies, in the US, Germany, and cross-nationally, looking specifically at search personalisation found only minor effects on content diversity. The German study explicitly concluded that results deny "the algorithmically based development and solidification of isolating filter bubbles" for search engines.

Even Pariser himself conceded that the internet was not entirely to blame.

What the research actually shows

Most people are not ideologically enclosed. They use multiple sources, encounter opposing views, and change their minds based on things they read. The filter bubble, as a description of the typical internet user's experience, does not hold up to empirical scrutiny.

This matters because the filter bubble became the dominant frame for thinking about algorithmic harm. Regulators focused on it. Platforms were pressured around it. A huge amount of academic and journalistic attention went into studying it. And the answer came back: the specific mechanism Pariser described, algorithmic filtering sealing people inside ideological enclosures, was not the main event.

That does not mean algorithmic systems are benign. It means the critique was aimed at the wrong target.

Where the Real Problem Was

While researchers were failing to find the filter bubble in news consumption patterns, internal platform documents were revealing something different and more concrete.

In 2016, Facebook researcher Monica Lee found that 64% of all extremist group joins on the platform were attributable to Facebook's own recommendation tools, specifically the "Groups You Should Join" and "Discover" features. The finding sat in internal documents until the Wall Street Journal reported it in May 2020, alongside a 2018 internal presentation slide that read: "Our algorithms exploit the human brain's attraction to divisiveness." Facebook's integrity teams proposed changes. Executives blocked most of them on the grounds that they were "anti-growth."

YouTube's chief product officer Neal Mohan told CES in January 2018 that algorithmic recommendations drove 70% of total watch time on the platform. Prior to reforms introduced in early 2019, that recommendation engine had a documented tendency to escalate users toward increasingly sensational and conspiratorial content, because that content performed well on engagement signals.

So the picture is more specific than the filter bubble thesis allowed for. Algorithmic recommendation was not sealing most people inside ideological bubbles when they browsed for news. It was, in particular contexts such as group recommendations and video escalation, actively routing people toward extremist and sensational content because that content performed well on engagement metrics. The harm was real. It just operated through a different mechanism than Pariser described.

The filter bubble framing was too broad and too focused on personalisation as the problem. The actual problem was engagement optimisation producing harmful outputs in specific high-stakes contexts. Those are related but distinct critiques, and conflating them made both harder to address.

The Search Personalisation Question

Search is where the filter bubble framing most directly intersects with SEO, so it is worth being precise about the current state.

Google introduced personalised search for logged-in users in 2005 and extended it to everyone via anonymous browser cookies in December 2009, at which point your past 180 days of search history began influencing what you saw. By 2018, Google had reversed course considerably. The company stated that very little personalisation was happening for organic results beyond location and the immediate context of the prior query in the same session. Ranking, Google argued, was being driven primarily by the query itself.

Location-based variation is real and well-documented. The more granular behavioural personalisation that generated concern in the early 2010s has been substantially walked back for organic results, though it remains more active in the ads layer.

The practical implication for rank tracking: SERP variance from personalisation in organic rankings is smaller than it was a decade ago, but location still produces meaningful differences, and the picture is not uniform across query types or verticals. A rank tracking tool querying from a single location is giving you a contextualised snapshot, not a universal fact. For iGaming specifically, jurisdictional restrictions interact with location signals to produce additional variance. The SERP for "online casino UK" is not the same document for every user. But the personalisation driving that is mostly regulatory and geographic, not behavioural.
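
One way to put a number on that location variance is to compare snapshots of the same query taken from different places. The sketch below is a minimal illustration, not a description of how any particular rank tracker works: the location labels, the `.example` domains, and the function names are all hypothetical, and it assumes you have already collected the ordered result lists yourself.

```python
from itertools import combinations


def jaccard(a, b):
    """Share of URLs two result lists have in common (0 = disjoint, 1 = same set)."""
    sa, sb = set(a), set(b)
    if not sa and not sb:
        return 1.0
    return len(sa & sb) / len(sa | sb)


def mean_rank_shift(a, b):
    """Average absolute position change for URLs present in both lists."""
    pos_b = {url: i for i, url in enumerate(b)}
    shifts = [abs(i - pos_b[url]) for i, url in enumerate(a) if url in pos_b]
    return sum(shifts) / len(shifts) if shifts else None


def compare_locations(serps):
    """Pairwise comparison of SERP snapshots keyed by location label."""
    report = {}
    for loc_a, loc_b in combinations(sorted(serps), 2):
        report[(loc_a, loc_b)] = {
            "jaccard": jaccard(serps[loc_a], serps[loc_b]),
            "mean_rank_shift": mean_rank_shift(serps[loc_a], serps[loc_b]),
        }
    return report


# Hypothetical snapshots of one query from two locations (placeholder domains).
serps = {
    "london": ["a.example", "b.example", "c.example", "d.example"],
    "manchester": ["a.example", "c.example", "b.example", "e.example"],
}

report = compare_locations(serps)
```

A low Jaccard score means the two locations are seeing substantially different sets of results. A high score with a large rank shift means the same pages appear in a different order. Either is a reason to treat a single-location rank as a local reading rather than a universal one.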

AI Answers: The Filter Bubble Made Structural

Here is where the argument turns. The thing the filter bubble debate was really about (invisible selection determining what version of information you receive, with no transparency about what was excluded) is now happening at scale. It just looks completely different from what Pariser described.

When Google's AI Mode generates a response, it does not show you the web. It shows you a synthesised answer constructed from a selected subset of sources, filtered through the model's training, shaped by the query context, and presented as a single coherent response. You receive a conclusion. You do not see the sources that were excluded. You do not see the framings that were not adopted. You do not see the questions the model chose not to address. The selection process is entirely upstream of your awareness.

The old SERP was imperfect. But it was legible. You could see ten results. You could evaluate the sources. You could notice that result three was from a site you had never heard of, click it anyway, and find the most useful answer. You had some visibility into the landscape. The editorial judgment, such as it was, was at least partially visible and negotiable.

AI synthesis removes that. The filtering has already happened before you see the output. One answer, one framing, one set of sources chosen by a process you cannot inspect. Pariser was worried about Facebook deciding which of your friends' posts you saw. That is nothing compared to a system that decides what version of a topic exists for you, constructed from sources you never see, in a process that cannot be audited.

The real filter bubble

It is not your social media feed showing you content you already agree with. It is an AI system constructing a single synthesised answer from an invisible source selection and presenting it with the implicit authority of a search engine. The bubble is not ideological. It is epistemic. And it is invisible by design.

The scale matters too. The filter bubble thesis worried about ideological self-selection affecting politically engaged users. AI answer synthesis affects every informational query, on every topic, for every user. It is not a niche phenomenon among partisan news consumers. It is the default mode of information retrieval for anyone using AI-assisted search, which is an increasingly large proportion of searches.

And unlike social media feed personalisation, which at least theoretically reflects your own preferences back at you, AI synthesis does not even have that partial justification. The selection is not based on what you have previously engaged with. It is based on what the model was trained on, what sources it deems credible, and how it interprets the query. Those are not your preferences. They are the model's, or more accurately, the choices of whoever built and trained it.

What This Means for Search Practitioners

The practical implications of this shift are significant and not yet fully priced into how most SEO strategy is conceived.

On personalisation: as established, Google has reduced organic search personalisation substantially. Location matters. Behavioural profiling matters less than it did. Your rank tracking is giving you a broadly representative picture for a given location, with caveats around query type. The filter bubble concern that occupied so much attention in the 2010s, divergent organic SERPs driven by user behavioural profiling, is probably not your main problem in 2026.

Your main problem is structural concentration. AI-generated answers are capturing an increasing share of informational queries. The traffic that used to distribute across multiple results (result one, two, three, the featured snippet, the forum thread someone found useful) is being absorbed by a single synthesised response. The competitive landscape is not ten ranked results. It is: are you a source the model selects when it constructs its answer, or are you not.

The criteria for that selection are not fully transparent. Demonstrably credible sources with strong E-E-A-T signals, high topical authority, and well-structured content appear to have an advantage. But this is inference from observed outputs, not published specification. You are optimising for a system that does not show its work.
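
Since inference from observed outputs is all that is available, one modest way to do it is to log which sources an AI answer cites for a set of queries and count how often each domain appears. The sketch below assumes you have gathered those citations by hand or with your own tooling. The queries, URLs, and function names are hypothetical placeholders, and the result says nothing about why a source was selected, only how often it was.

```python
from collections import Counter
from urllib.parse import urlparse


def domain_of(url):
    """Lower-cased host with a leading 'www.' stripped."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def citation_share(observations):
    """Fraction of observed answers in which each domain is cited at least once."""
    counts = Counter()
    for cited_urls in observations.values():
        # A set, so a domain cited twice in one answer still counts once.
        counts.update({domain_of(u) for u in cited_urls})
    total = len(observations)
    return {d: n / total for d, n in counts.items()} if total else {}


# Hypothetical log: query -> URLs cited in the AI answer observed for it.
observations = {
    "what is a filter bubble": [
        "https://www.example.org/a",
        "https://vendor.example/blog",
    ],
    "filter bubble research": [
        "https://example.org/b",
        "https://example.org/c",
    ],
    "are echo chambers real": ["https://journal.example/x"],
}

shares = citation_share(observations)
```

Tracked over time and across a stable query set, a figure like this gives a rough view of whether you are among the selected sources at all. It is a small-sample observation, and answers can vary between runs, so it should be read as a trend indicator rather than a measurement.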

For iGaming, the complication is regulatory. AI models are cautious about gambling content in ways that are inconsistent and not always well-calibrated to jurisdictional reality. A synthesised answer about "best online casinos" may not be generated at all for certain queries, or may be heavily caveated in ways that disadvantage commercial affiliates. The filter in AI Mode is not ideological. But it has commercial and regulatory dimensions that affect the iGaming vertical specifically.

The Conclusion Pariser Should Have Reached

The filter bubble was the right worry pointed at the wrong mechanism. Pariser was correct that invisible algorithmic selection over what information people receive is a serious problem. He was wrong about how it would manifest. Facebook's news feed was not, in the end, the main vehicle. The research is fairly clear on that.

But the concern was not misplaced. It was premature. The information environment in 2011 was still broadly legible. Multiple sources, visible results, user agency intact. The algorithmic influence was real but partial. You could still see the shape of the web you were navigating.

In 2026, AI intermediaries are doing to information retrieval what Pariser thought social feeds were doing to news consumption, and doing it more completely, more invisibly, and at far greater scale. Every informational query now has the potential to be answered by a system that has already made all the editorial decisions before you see the result. Which sources count. What the answer is. What the alternatives are not.

That is the filter bubble. It just took fifteen years and a different technology to actually arrive.

Key Takeaways

- The filter bubble thesis, as Pariser originally described it, is not well-supported by the empirical research. Echo chambers are less widespread than assumed. Algorithmic filtering of news feeds is not the primary driver of ideological segregation.

- The real documented harms from recommendation algorithms were more specific: Facebook routing users to extremist groups (64% of joins per internal research), YouTube's pre-2019 escalation toward conspiratorial content. Engagement optimisation producing harmful outputs in specific contexts, not broad ideological enclosure.

- Google's organic search personalisation has been substantially reduced since 2018. Location is the dominant remaining variable. The SERP variance you need to account for is mostly geographic and jurisdictional, not behavioural.

- AI-generated answers represent the filter bubble made structural. One synthesised response, invisible source selection, no legible alternatives. Qualitatively different from, and worse than, what the original filter bubble thesis described.

- For SEO in 2026: the personalisation concern of the 2010s has receded. The structural concentration concern has intensified. Being selected as a synthesis source is the new competition, and the selection criteria are not fully transparent.

- For iGaming specifically: AI models have inconsistent caution around gambling content. The filter is not ideological but it has real effects on visibility.

Sources

- Pariser, E. (2011). The Filter Bubble: What the Internet Is Hiding from You. Penguin Press.

- Horwitz, J. and Seetharaman, D. (2020). "Facebook Executives Shut Down Efforts to Make the Site Less Divisive." Wall Street Journal, 26 May 2020.

- Neal Mohan, YouTube CPO, CES panel, January 2018. Reported by Quartz and New America Foundation.

- Flaxman, S., Goel, S., and Rao, J. (2016). "Filter Bubbles, Echo Chambers, and Online News Consumption." Public Opinion Quarterly, 80(S1), 298-320.

- Ross Arguedas, A., Robertson, C., Fletcher, R., and Nielsen, R. (2022). "Echo chambers, filter bubbles, and polarisation: a literature review." Reuters Institute for the Study of Journalism, University of Oxford.

- Fletcher, R. (2020). "The truth behind filter bubbles: Bursting some myths." Reuters Institute for the Study of Journalism.

- Haim, M., Graefe, A., and Brosius, H. (2018). "Burst of the Filter Bubble?" Digital Journalism, 6(3), 330-343.

- Google Blog (2009). "Personalized Search for everyone." 4 December 2009.

- Sullivan, D. (2018). Statements on reduced organic search personalisation. Search Engine Land.